Sequence Formatter

The Sequence Formatter Snap converts incoming documents from upstream Snaps into the Hadoop sequence file format.

Overview

- This is a Format-type Snap.

- Works in Ultra Tasks.

Snap views

| Input/Output | Type of View | Examples of Upstream and Downstream Snaps |
|---|---|---|
| Input | This Snap has at most one document input view. | The upstream Snap for Sequence Formatter should output map, table, or key-value formatted data. Valid data types include String, Integer, Number, and Boolean. |
| Output | This Snap has at most one binary output view. | The Sequence Formatter Snap outputs binary data, so the downstream Snap must be a data store output Snap such as File Writer or HDFS Writer. |
| Error | This Snap has at most one document error view and produces zero or more documents in the view. | |

Supported Accounts

Accounts are not used with this Snap.

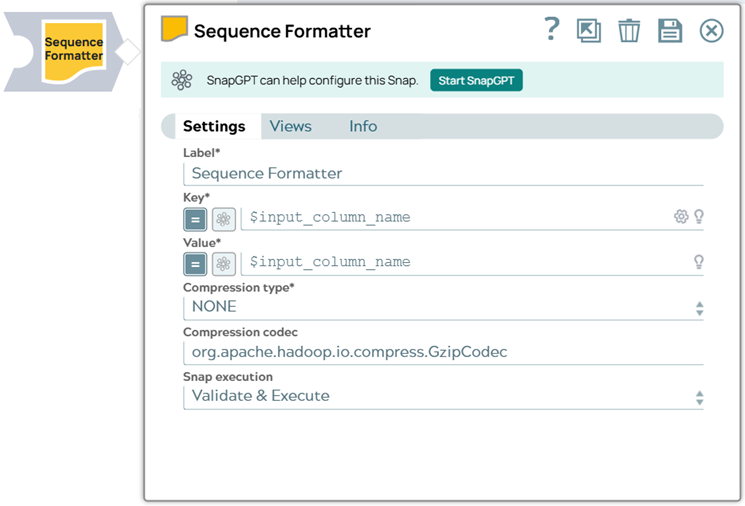

Snap settings

| Field Name | Description |
|---|---|
| Label* (String) | Required. Specify a unique name for the Snap. Modify the label to be more descriptive, especially if the pipeline contains more than one Snap of the same type. Default value: Sequence Formatter. Example: Sequence Formatter |
| Key* (String/Expression) | Required. JSON path for the key. Default value: [None]. Example: $input_column_name |
| Value* (String/Expression) | Required. JSON path for the value. Default value: [None]. Example: $input_column_name |
| Compression type (Dropdown list) | Select the sequence file compression type. Default value: [None] |
| Compression codec (String/Expression) | Fully qualified compression codec class name. Default value: [None]. Example: org.apache.hadoop.io.compress.GzipCodec |
| Snap Execution | Select one of the three modes in which the Snap executes. Default value: Execute only. Example: Validate & Execute |
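As a rough illustration of how the Key and Value settings relate to an input document, the sketch below resolves simple `$field` JSON paths against a dictionary and pairs the results. It is not the Snap's implementation: the `resolve_path` and `to_key_value` helpers and the sample field names are hypothetical, and only top-level and dotted paths are handled.

```python
from typing import Any


def resolve_path(document: dict, path: str) -> Any:
    """Resolve a simple JSON path such as "$id" or "$user.name" against a dict."""
    if not path.startswith("$"):
        raise ValueError(f"path must start with '$': {path!r}")
    current: Any = document
    # Walk each dotted segment after the leading "$".
    for segment in path[1:].split("."):
        if not isinstance(current, dict) or segment not in current:
            raise KeyError(f"{path!r} not found in document")
        current = current[segment]
    return current


def to_key_value(document: dict, key_path: str, value_path: str) -> tuple:
    """Pair the fields selected by the Key and Value paths."""
    return resolve_path(document, key_path), resolve_path(document, value_path)


# Hypothetical input document with Integer, String, and Boolean fields.
doc = {"id": 42, "name": "widget", "in_stock": True}
pair = to_key_value(doc, "$id", "$name")
print(pair)
```

A path that does not exist in the document raises `KeyError` in this sketch; how the Snap itself treats a missing path is not covered here.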

Troubleshooting

Writing to S3 files with HDFS version CDH 5.8 or later

When running HDFS version CDH 5.8 or later, the Hadoop Snap Pack may fail to write to S3 files. To work around this, make the following changes in Cloudera Manager:

- Go to HDFS configuration.

- In Cluster-wide Advanced Configuration Snippet (Safety Valve) for core-site.xml, add an entry with the following details:

  - Name: fs.s3a.threads.max

  - Value: 15

- Click Save.

- Restart all the nodes.

- Under Restart Stale Services, select Re-deploy client configuration.

- Click Restart Now.
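After the client configuration is redeployed, it can be useful to confirm that the property actually reached core-site.xml. The sketch below is one possible check, assuming the file uses the standard Hadoop `<configuration>`/`<property>` layout; the `get_hadoop_property` helper is hypothetical, and the `sample` string stands in for the contents of a real core-site.xml.

```python
import xml.etree.ElementTree as ET


def get_hadoop_property(core_site_xml: str, name: str):
    """Return the value of a named property from core-site.xml text, or None."""
    root = ET.fromstring(core_site_xml)
    for prop in root.iter("property"):
        if prop.findtext("name") == name:
            return prop.findtext("value")
    return None


# Sample content in the standard Hadoop configuration layout.
sample = """<configuration>
  <property>
    <name>fs.s3a.threads.max</name>
    <value>15</value>
  </property>
</configuration>"""

threads_max = get_hadoop_property(sample, "fs.s3a.threads.max")
print(threads_max)
```

To check a deployed file, read its text and pass it to the helper in place of `sample`; the file's location depends on the cluster setup and is not assumed here.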