Inserting data from a Databricks table into a Snowflake table

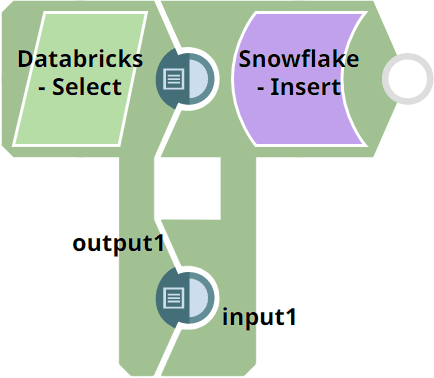

Consider the scenario where we want to load data available in a Databricks table into a new table in your Snowflake instance with the table schema in tact. This example demonstrates how we can use the Databricks - Select Snap to achieve this result:

To achieve this, we can use the Databricks - Merge Into Snap.

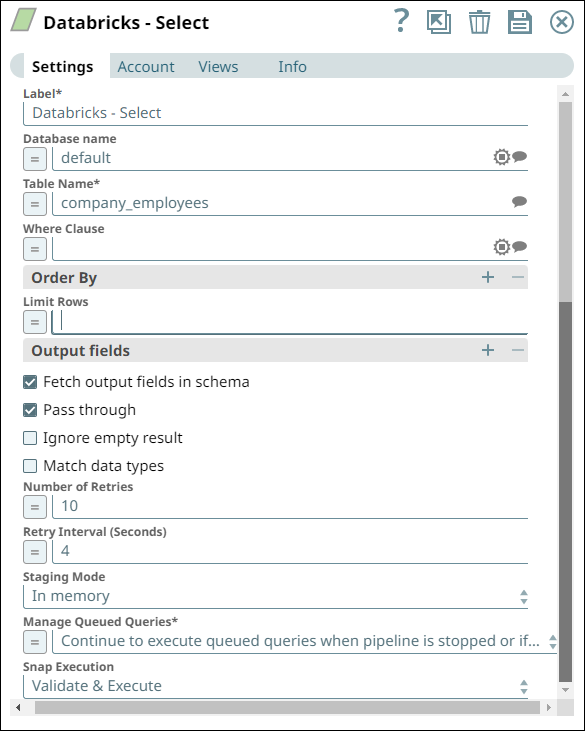

- In this Pipeline, we configure the Databricks - Select

Snap with the appropriate account, zero input views, and open two output views—one for

capturing the rows data and the other for capturing the table schema (as metadata). See

the sample configuration of the Snap and its account below:

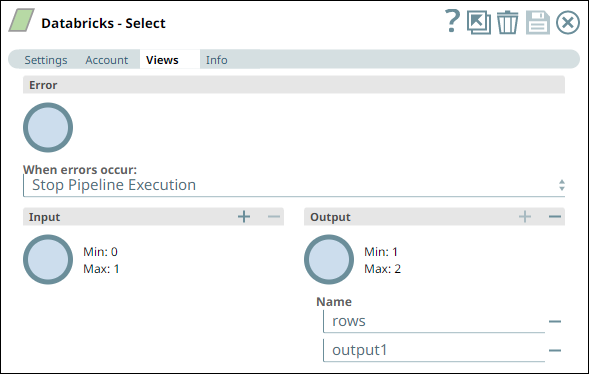

Databricks - Select Snap Settings Databricks - Select Snap Views

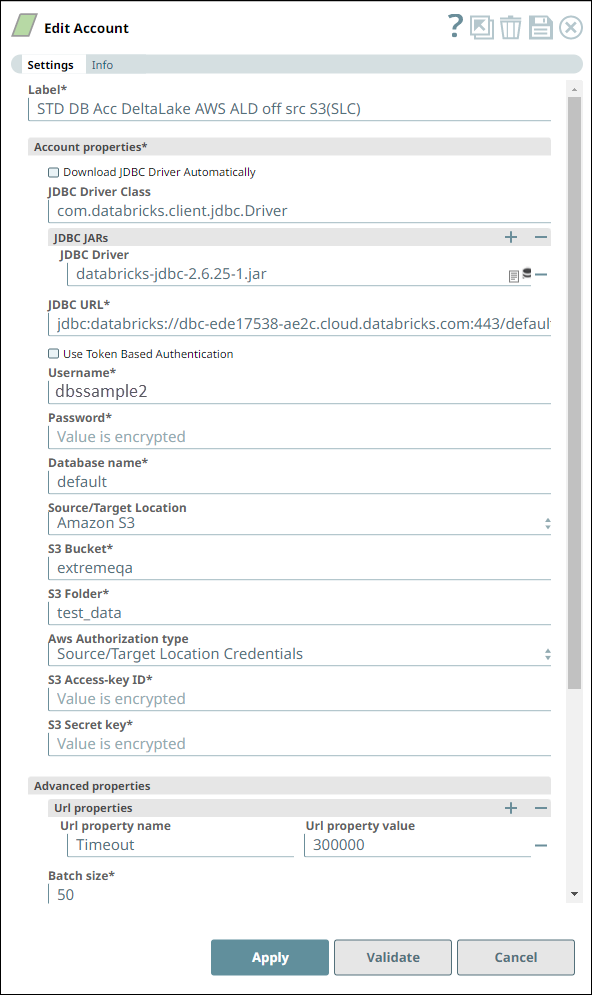

Account Settings for Databricks - Select Snap Notes

We configure the Snap to fetch the table schema (output1) and the table data (rows) from the example_company_employees table in the DLP instance.

The Snap uses a Databricks JDBC driver and the corresponding basic auth credentials to connect to the Databricks instance hosted on the AWS cloud.

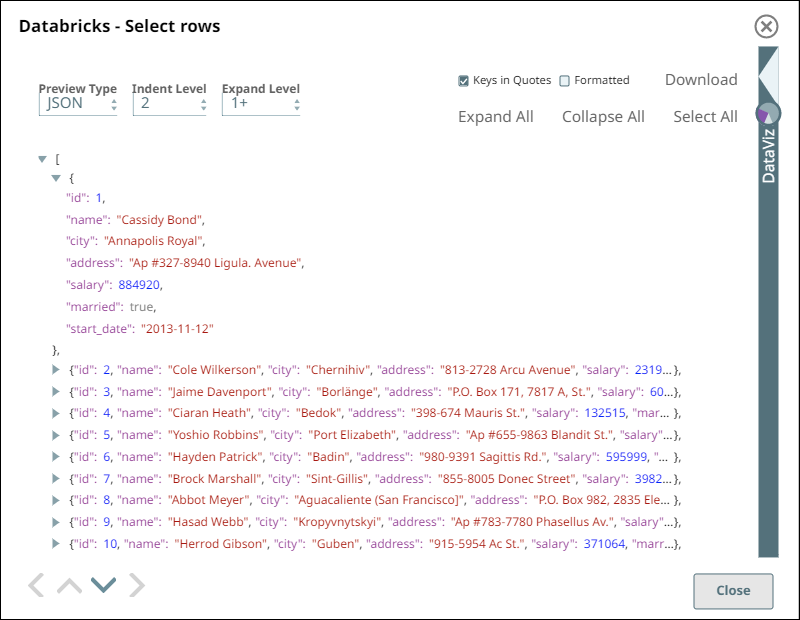

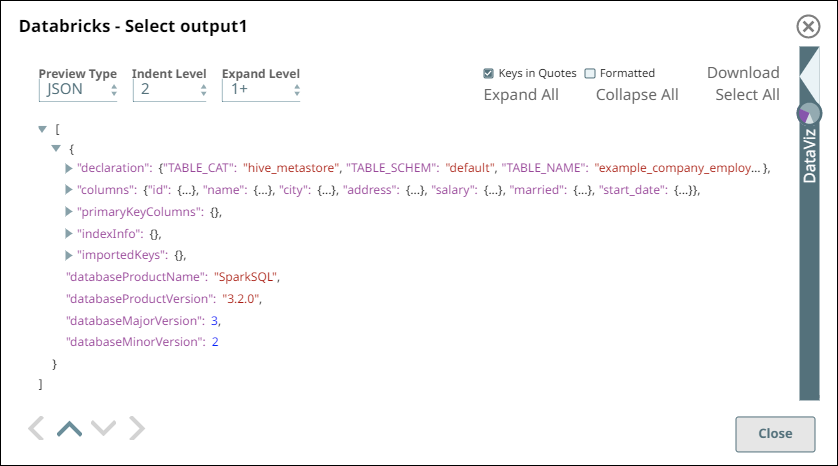

- Upon validation, the Snap displays the table schema and a sample set of records from

the specified table in its two output views. During runtime, the Snap retrieves all data

from the example_company_employees table that match the Snap’s configuration

(WHERE conditions, LIMIT, and so on).

Databricks - Select Snap Output View for Table data Databricks - Select Snap Output View for Table Schema

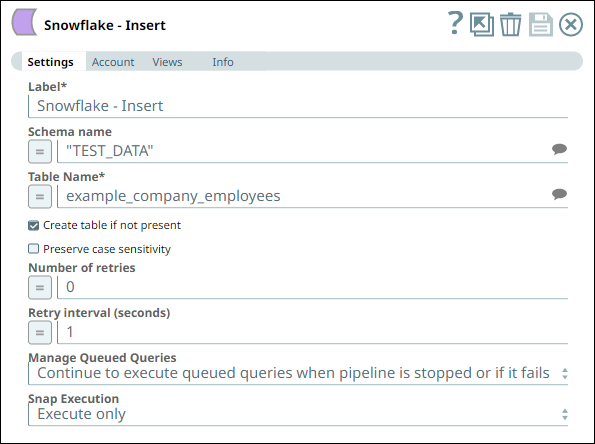

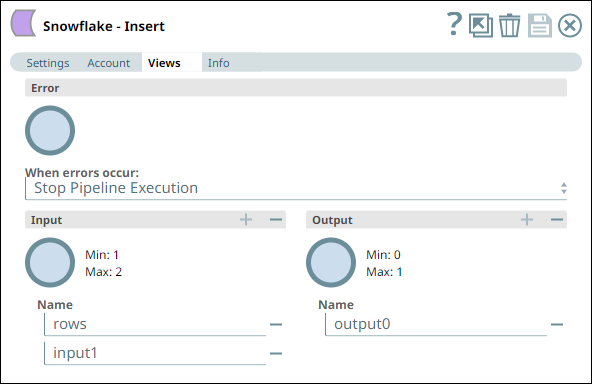

- Similarly, we use a Snowflake - Insert Snap from the

Snowflake Snap Pack with the appropriate account and

two input views to consume the two outputs coming from the Databricks - Select Snap. See the sample configuration of

the Snap and its account.

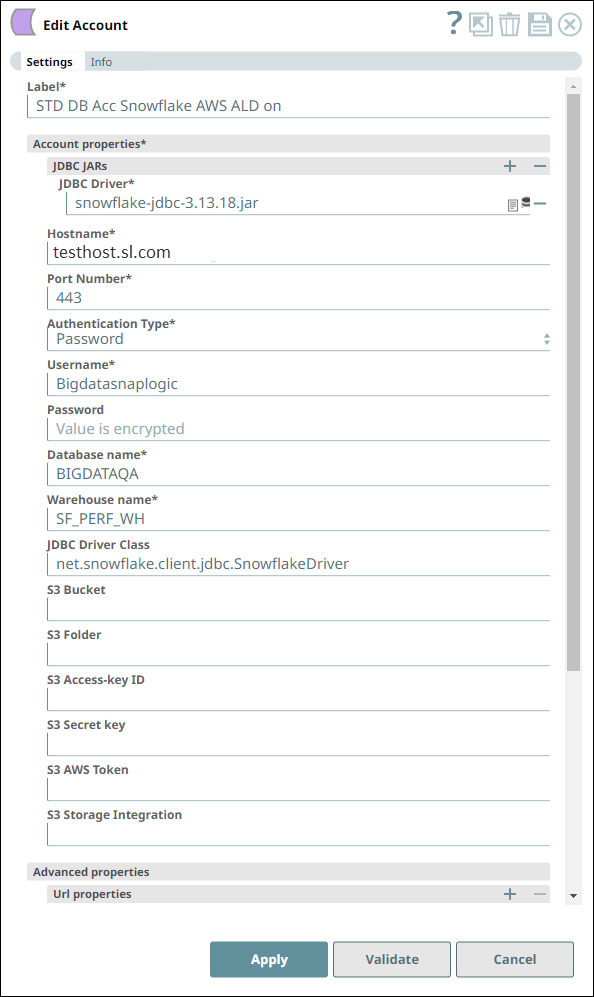

Snowflake - Insert Snap Settings Snowflake - Insert Snap Views

Account Settings for Snowflake - Insert Snap Notes

We configure the Snap to consume the table schema (output1) and the table data (rows) from the example_company_employees table in the DLP instance.

The Snap uses a Snowflake JDBC driver and the corresponding basic auth credentials to connect to the Snowflake (target) instance hosted on the AWS cloud.

The Snap is configured to perform the Insert operation only during Pipeline execution and hence, it does not display any results during validation. Upon Pipeline Execution, the Snap creates a new table example_company_employees in the specified Snowflake database using the schema from the Databricks - Select Snap and populates the retrieved rows data in this new table.

- Download and import the SLP file into your Environment.

- Configure Snap accounts.

- Provide Pipeline parameters, if any.