Databricks - Merge Into

Overview

You can use this Snap to run a MERGE INTO SQL statement based on the updates available in the source data files. In other words, this Snap performs a bulk UPSERT (UPDATE + INSERT) operation that updates existing rows of a target DLP table and adds new rows to it. The source of your data can be a file in a cloud storage location, an input view from an upstream Snap, or a table that can be accessed through a JDBC connection. The source data can be in CSV, JSON, PARQUET, TEXT, or ORC format.
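To illustrate the UPSERT behavior described above, the following Python sketch applies a batch of source rows to an in-memory target. Rows whose key matches are updated, and the remaining rows are inserted. This is a conceptual model only, not how the Snap or Databricks executes MERGE INTO. The `merge_upsert` function, the `id` key, and the sample rows are hypothetical, and the sketch assumes each key appears at most once in the source.

```python
from typing import Dict, List

Row = Dict[str, object]


def merge_upsert(target: List[Row], source: List[Row], key: str) -> List[Row]:
    """Return the target rows updated or extended with source rows, matched on `key`."""
    # Index copies of the target rows by key (models ON src.key = trg.key).
    merged: Dict[object, Row] = {row[key]: dict(row) for row in target}
    for src in source:
        if src[key] in merged:
            # Models WHEN MATCHED THEN UPDATE: overwrite the matched row's columns.
            merged[src[key]].update(src)
        else:
            # Models WHEN NOT MATCHED THEN INSERT: add the new row.
            merged[src[key]] = dict(src)
    return list(merged.values())


target = [{"id": 1, "name": "Ann", "city": "Oslo"}, {"id": 2, "name": "Bo", "city": "Rome"}]
source = [{"id": 2, "name": "Bo", "city": "Paris"}, {"id": 3, "name": "Cy", "city": "Lima"}]

for row in merge_upsert(target, source, "id"):
    print(row)
# {'id': 1, 'name': 'Ann', 'city': 'Oslo'}
# {'id': 2, 'name': 'Bo', 'city': 'Paris'}
# {'id': 3, 'name': 'Cy', 'city': 'Lima'}
```

Row 2 is updated in place and row 3 is added; the original `target` list is not modified because the sketch works on copies.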

This Snap uses the following SQL statements:

- COPY INTO: Loads data from staged files into an existing table.

- CREATE TABLE [USING]: Loads data from external sources such as JDBC.

- CREATE TABLE: Creates a table; in this case, a temporary table.

- MERGE INTO: Inserts new rows, updates existing rows, and deletes rows that match a condition.
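The MERGE INTO statement combines the target table, aliases, ON condition, and WHEN clauses that you configure in the Snap settings. The following Python sketch assembles such a statement as a string to show how these pieces fit together. It is an illustration under assumptions: `build_merge_sql`, `MergeClause`, and the `staged_src` source name are hypothetical, `UPDATE SET *` and `INSERT *` are Databricks SQL shorthand actions, and the statement the Snap actually generates may differ. The sketch does not sanitize its inputs, so do not pass untrusted values to it.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class MergeClause:
    when: str                        # e.g. "WHEN MATCHED" or "WHEN NOT MATCHED"
    action: str                      # e.g. "UPDATE SET *", "DELETE", "INSERT *"
    condition: Optional[str] = None  # optional extra predicate, joined with AND


def build_merge_sql(table: str, target_alias: str, source: str,
                    source_alias: str, on_condition: str,
                    clauses: List[MergeClause]) -> str:
    """Assemble a MERGE INTO statement (hypothetical helper, no input sanitizing)."""
    lines = [
        f"MERGE INTO {table} AS {target_alias}",
        f"USING {source} AS {source_alias}",
        f"ON {on_condition}",
    ]
    for clause in clauses:
        extra = f" AND {clause.condition}" if clause.condition else ""
        lines.append(f"{clause.when}{extra} THEN {clause.action}")
    return "\n".join(lines)


sql = build_merge_sql(
    table="cust_db.cust_records",
    target_alias="trgt_tbl",
    source="staged_src",  # hypothetical staged/temporary source table
    source_alias="src_tbl",
    on_condition="src_tbl.id = trgt_tbl.id",
    clauses=[
        # The extra condition decides between DELETE and UPDATE for matched rows.
        MergeClause("WHEN MATCHED", "DELETE", "src_tbl.net_value > 5000"),
        MergeClause("WHEN MATCHED", "UPDATE SET *"),
        MergeClause("WHEN NOT MATCHED", "INSERT *"),
    ],
)
print(sql)
```

Because both DELETE and UPDATE attach to WHEN MATCHED, the conditional clause is listed first and the unconditional one last, which is the order Databricks expects when several WHEN MATCHED clauses are present.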

- This is a Write-type Snap.

- This Snap does not support Ultra Tasks.

Prerequisites

- Valid access credentials to a DLP instance with adequate access permissions to perform the action in context.

- Valid access to the external source data in one of the following: Azure Blob Storage, ADLS Gen2, DBFS, GCP, AWS S3, or another database (JDBC-compatible).

Limitations

Snaps in the Databricks Snap Pack do not support array, map, and struct data types in their input and output documents.
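Before routing documents to this Snap, you might check them for values that map to these unsupported types. The following Python sketch flags top-level fields whose values decode as JSON arrays or objects. Treating JSON arrays as array values and JSON objects as map or struct values is an assumption, and `find_unsupported_fields` is a hypothetical helper, not part of the Snap.

```python
import json
from typing import Any, Dict, List


def find_unsupported_fields(doc: Dict[str, Any]) -> List[str]:
    """Return top-level field names whose values are JSON arrays or objects."""
    # json.loads decodes JSON arrays to list and JSON objects to dict.
    return [name for name, value in doc.items() if isinstance(value, (list, dict))]


doc = json.loads('{"id": 7, "name": "Ann", "tags": ["a", "b"], "address": {"city": "Oslo"}}')
print(find_unsupported_fields(doc))  # ['tags', 'address']
```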

Snap views

| Type | Description | Examples of upstream and downstream Snaps |
|---|---|---|
| Input | This Snap can read from two input documents at a time: | |
| Output | A JSON document containing the bulk load request details and the result of the bulk load operation. | |

Learn more about Error handling.

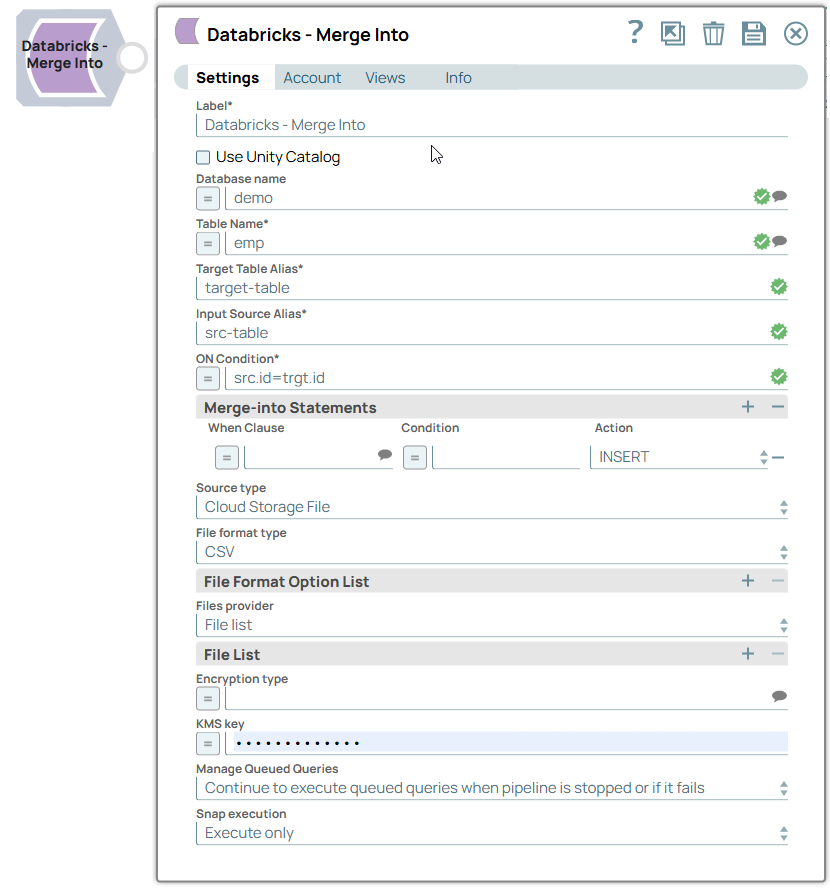

Snap settings

- Expression icon: Allows using pipeline parameters to set field values dynamically (if enabled). SnapLogic Expressions are not supported. If disabled, you can provide a static value.

- SnapGPT: Generates SnapLogic Expressions based on natural language using SnapGPT. Learn more.

- Suggestion icon: Populates a list of values dynamically based on your Snap configuration. You can select only one attribute at a time using the icon. Type into the field if it supports a comma-separated list of values.

- Upload: Uploads files. Learn more.

| Field/Field set | Type | Description |
|---|---|---|
| Label | String | Required. Specify a unique name for the Snap. Modify this to be more appropriate, especially if the pipeline contains more than one of the same Snap. Default value: Databricks - Merge Into. Example: Db_MergeInto_FromS3 |
| Use unity catalog | Checkbox | Select this checkbox to use the Unity catalog to access data from the catalog. Default status: Deselected |
| Catalog name | String/Expression/Suggestion | Appears when you select Use unity catalog. Specify the name of the catalog to use with the Unity catalog. Default value: hive_metastore. Example: xyzcatalog |
| Database name | String/Expression/Suggestion | Enter the name of the database in which the target table exists. Leave this blank to use the database name specified in the Database Name field of the account settings. Default value: None. Example: cust_db |
| Table name | String/Expression/Suggestion | Required. Enter the name of the table on which you want to perform the MERGE INTO operation. Default value: None. Example: cust_records |
| Target Table Alias | String | Required. Enter an alias for the target table to use in the MERGE INTO operation. Default value: None. Example: trgt_tbl |
| Input Source Alias | String | Required. Enter an alias for the source table or data to use in the MERGE INTO operation. Default value: None. Example: src_tbl |
| ON Condition | String/Suggestion | Required. Specify the condition on which the Snap should update the target table with the data from the source table or files. Default value: None. Example: src.id=trg.id |
| Merge-into Statements | | Use this field set to specify the conditions that activate the MERGE INTO operation and any additional conditions that must be met. Specify each condition in a separate row. This field set contains the following fields: When Clause, Condition, and Action. Note: The Snap allows the following combinations of actions: |
| When Clause | String/Expression/Suggestion | Specify the matching condition based on the outcome of the ON Condition, or select a clause from the suggestion list. Available options are: DLP supports the following MERGE INTO operations: Default value: None. Example: WHEN MATCHED |
| Condition | String/Expression | Specify additional criteria, if needed. The action associated with the specified condition is not performed if the condition's criteria are not met. The condition can reference both the source and target tables, the source table only, the target table only, or no table at all. This additional condition lets the Snap determine whether to perform the UPDATE or DELETE action, since both actions correspond to the WHEN MATCHED clause. You can also use pipeline parameters in this field to bind values; however, take care to avoid SQL injection. Default value: None. Example: net-value > 5000 |
| Action | Dropdown list | Choose the action to apply on the condition. Available options are: Default value: INSERT. Example: DELETE |
| Source Type | Dropdown list | Select the type of source from which you want to update the data in your DLP instance. Available options are: Default value: Cloud Storage File. Example: Input View |
| Source table name | String | Appears when the Source Type is JDBC. Enter the source table name. If you do not specify a database in this field, the Snap uses the default database configured in its JDBC-type account. |
| File format type | Dropdown list | Appears when the Source Type is Cloud Storage File. Select the file format of the source data file: CSV, JSON, ORC, PARQUET, or TEXT. Default value: CSV. Example: PARQUET |
| File Format Option List | | Appears when the Source Type is Cloud Storage File. Use this field set to choose the file format options to associate with the MERGE INTO operation, based on your source file format. Choose one file format option in each row. |
| File format option | String/Expression/Suggestion | Appears when the Source Type is Cloud Storage File. Select a file format option from the available options and set values that suit your MERGE INTO needs, without changing the syntax displayed in this field. Default value: None. Example: cust_ID |
| Files provider | Dropdown list | Appears when the Source Type is Cloud Storage File. Specify how you provide the list of source files: File list or pattern. The available fields change based on your selection: the File list field set for File list, and the File pattern field for pattern. Default value: File list. Example: pattern |
| File list | | Appears when the Source Type is Cloud Storage File and Files provider is File list. Use this field set to specify the file paths to use for the MERGE INTO operation. Specify one file path in each row. |
| File | String | Appears when the Source Type is Cloud Storage File and Files provider is File list. Enter the path of the file to use for the MERGE INTO operation. Default value: None. Example: cust_data.csv |
| File pattern | String/Expression | Appears when the Source Type is Cloud Storage File and Files provider is pattern. Enter a glob pattern to match the file name and/or absolute path, enclosed in single quotes. Learn more: Examples of COPY INTO (Delta Lake on Databricks) for DLP. Default value: None. Example: folder1/*.csv |
| Encryption type | String/Expression/Suggestion | Appears when the Source Type is Cloud Storage File. Select the encryption type to use for decrypting the source data and/or files staged in the S3 buckets. Note: Server-side encryption is available only for S3 accounts. Default value: None. Example: Server-Side KMS Encryption |
| KMS key | String/Expression | Appears when the Source Type is Cloud Storage File and Encryption type is Server-Side KMS Encryption. Enter the AWS Key Management Service (KMS) ID or ARN to use to decrypt the encrypted files from the S3 location. If your source files are in S3, see Loading encrypted files from Amazon S3 for more details. Default value: None. Example: MF96D-M9N47-XKV7X-C3GCQ-G5349 |
| Number of Retries | Integer/Expression | Specify the maximum number of retry attempts when the Snap fails to write. Minimum value: 0. Default value: 0. Example: 3 |
| Retry Interval (seconds) | Integer/Expression | Appears when the Source Type is Input View. Specify the minimum number of seconds the Snap must wait before each retry attempt. Minimum value: 1. Default value: 1. Example: 3 |
| Manage Queued Queries | Dropdown list | Select this property to determine whether the Snap should continue or cancel the execution of the queued Databricks SQL queries when you stop the pipeline. Note: If you select Cancel queued queries when pipeline is stopped or if it fails, read queries under execution are cancelled, whereas write queries under execution are not. Databricks internally determines which queries are safe to cancel and cancels them. Warning: Due to an issue with DLP, aborting an ELT pipeline validation (with preview data enabled) aborts only those SQL statements that retrieve data using bind parameters; all other static statements (which use values instead of bind parameters) persist. To avoid this issue, always configure your Snap settings to use bind parameters in its SQL queries. Default value: Continue to execute queued queries when pipeline is stopped or if it fails. Example: Cancel queued queries when pipeline is stopped or if it fails |
| Snap execution | Dropdown list | Choose one of the three modes in which the Snap executes. Available options are: Default value: Execute only. Example: Validate & Execute |
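The Number of Retries and Retry Interval (seconds) settings describe a bounded retry loop. The following Python sketch models that behavior, assuming a fixed wait between attempts; the Snap's actual retry policy is not documented here, and `run_with_retries` and `flaky_write` are hypothetical. The input check mirrors the documented minimum values (0 retries, 1 second), and the `sleep` parameter is injectable so the example runs without real delays.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_retries(write: Callable[[], T], retries: int = 0,
                     interval_s: float = 1.0,
                     sleep: Callable[[float], None] = time.sleep) -> T:
    """Call write(); on failure, retry up to `retries` more times (assumed fixed interval)."""
    if retries < 0 or interval_s < 1:
        raise ValueError("retries must be >= 0 and interval_s must be >= 1")
    attempt = 0
    while True:
        try:
            return write()
        except Exception:
            if attempt >= retries:
                raise  # Out of retries: surface the last error.
            attempt += 1
            sleep(interval_s)  # Wait before the next attempt.


# Hypothetical write that fails twice with a transient error, then succeeds.
calls = {"n": 0}


def flaky_write() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("transient failure")
    return "ok"


waits = []  # Records requested waits instead of sleeping.
print(run_with_retries(flaky_write, retries=3, interval_s=1, sleep=waits.append))  # ok
print(calls["n"], waits)  # 3 [1, 1]
```

With the default of 0 retries, the first failure is raised immediately, which matches the documented default of no retry attempts.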

Troubleshooting

| Error | Reason | Resolution |

|---|---|---|

| Missing property value | You have not specified a value for the required field where this message appears. | Ensure that you specify valid values for all required fields. |
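A Missing property value error means that a required field is blank. As a hypothetical pre-flight check, the following Python sketch lists which of the fields marked Required in the Snap settings table are absent or blank in a settings dictionary. The `missing_required` function and the dictionary layout are assumptions for illustration, not a SnapLogic API.

```python
from typing import Dict, List, Optional

# Fields marked Required in the Snap settings table.
REQUIRED_FIELDS = ["Label", "Table name", "Target Table Alias",
                   "Input Source Alias", "ON Condition"]


def missing_required(settings: Dict[str, Optional[str]]) -> List[str]:
    """Return required fields that are absent, None, or whitespace-only."""
    return [f for f in REQUIRED_FIELDS if not (settings.get(f) or "").strip()]


settings = {"Label": "Db_MergeInto_FromS3", "Table name": "cust_records",
            "Target Table Alias": "trgt_tbl", "Input Source Alias": "  ",
            "ON Condition": None}
print(missing_required(settings))  # ['Input Source Alias', 'ON Condition']
```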