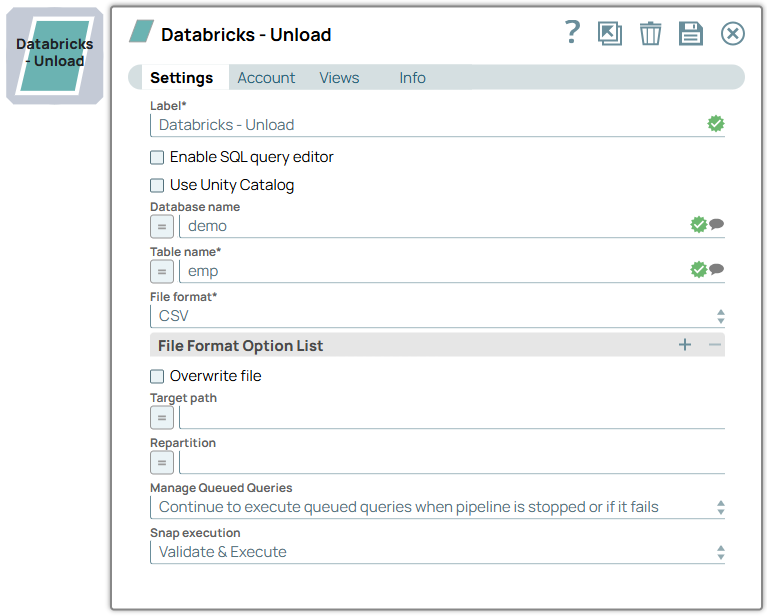

Databricks - Unload

Overview

You can use this Snap to unload data from the tables in your Databricks Lakehouse Platform (DLP) instance to a file in the cloud location. You can save the file in CSV, JSON, PARQUET, ORC, or AVRO format and as multiple partitions if needed. Ensure that you configure a valid target location in the Snap’s account for saving the file in the preferred format.

Write-type Snap

Does not support Ultra Tasks

Prerequisites

- Valid access credentials to a DLP instance with adequate access permissions to perform the action in context.

- Valid access to the external source data in one of the following: Azure Blob Storage, ADLS Gen2, DBFS, GCP, AWS S3, or another database (JDBC-compatible).

Limitations

Snaps in the Databricks Snap Pack do not support array, map, and struct data types in their input and output documents.

Snap views

| View | Description | Examples of upstream and downstream Snaps |

|---|---|---|

| Input | A JSON document containing parameterized inputs for the Snap’s configuration, if needed. | |

| Output | A JSON document containing the unload request details and the result of the unload operation. | |

| Error |

Error handling is a generic way to handle errors without losing data or failing the Snap execution. You can handle the errors that the Snap might encounter when running the pipeline by choosing one of the following options from the When errors occur list under the Views tab. The available options are:

Learn more about Error handling in Pipelines. |

|

Snap settings

- Expression icon (

): Allows using pipeline parameters to set field values dynamically (if enabled). SnapLogic Expressions are not supported. If disabled, you can provide a static value.

- SnapGPT (

): Generates SnapLogic Expressions based on natural language using SnapGPT. Learn more.

- Suggestion icon (

): Populates a list of values dynamically based on your Snap configuration. You can select only one attribute at a time using the icon. Type into the field if it supports a comma-separated list of values.

- Upload

: Uploads files. Learn more.

: Uploads files. Learn more.

| Field / Field set | Type | Description |

|---|---|---|

| Label | String | Required. Specify a unique name for the Snap. Modify this to be more appropriate, especially if more than one of the same Snaps is in the pipeline. Default value: Databricks - Unload Example: ELT RS Account |

| Use unity catalog | Checkbox | Select this checkbox to use the Unity catalog to access data from the

catalog. Default status: Deselected |

| Catalog name | String/Expression/ Suggestion | Appears when you select Use unity catalog. Specify the name of the catalog for using the unity catalog. Default value: hive_metastore Example: xyzcatalog |

| Enable SQL query editor | Checkbox | Select this checkbox to write the SQL statement instead of specifying the

Database name and Table name. Default status: Deselected |

| SQL Query Editor | String/Expression | Appears when you Enable SQL query editor checkbox is selected. Required. Specify the SQL statement, usually the SELECT statement, to indicate the source data for the unload operation. Tip: Avoid specifying multiple SQL statements in this query editor

field. Default value: None. Example: SELECT * from cust_table1 |

| Database name | String/Expression/ Suggestion | Appears when you Enable SQL query editor checkbox is not

selected. Specify the name of the source table’s database in plain text, as an expression, or using the suggestions list for the unload operation. Default value: None. Example: customer_data |

| Table name | String/Expression/ Suggestion | Appears when you Enable SQL query editor checkbox is not selected. Required. Specify the name of the source table in plain text, as an expression, or using the suggestions list. The unload operation is performed on this table when your run the Pipeline. Default value: None. Example: customer_data |

| File format | Dropdown list | Required. Select the file format for the file

to which the source table data must be unloaded. Default value: ORC Example: AVRO |

| File Format Option List | You can use this field set to define the list of file format options to apply while unloading the data. Specify one file format option in each row. The list of file format options available vary based on the File format selected. | |

| File format option | String/Expression/ Suggestion | Enter the file format option with the right syntax or choose from the suggested

list of file format options that is based on the file format selected. Ensure to

edit the option without changing the expected syntax to suit your unloading

requirement. Default value: None. Example: 'nullValue' = '' |

| Overwrite File | Checkbox | Select this checkbox to overwrite the file if it exists in the specified target

path. Default status: Deselected |

| Target Path | String/Expression | Enter the relative path for saving the target file that contains the unloaded

data. Note: Ensure that you have configured the Snap’s account with the details

required to access this target location. Default value: None. Example: /databricks/unloads/csv_files |

| Repartition | String/Expression | Specify the number of partitions (positive integers only) that you need to

store the unloaded data file in the target location. Default value: None. Example: 4 |

| Manage Queued Queries | Dropdown list | Select this property to determine whether the Snap should continue or cancel

the execution of the queued Databricks SQL queries when you stop the

Pipeline. Note: If you select Cancel queued queries when pipeline is stopped or

if it fails, then the read queries under execution are cancelled, whereas

the write type of queries under execution are not cancelled. Databricks internally

determines which queries are safe to be cancelled and cancels those

queries. Warning: Due to an issue with DLP, aborting an ELT

Pipeline validation (with preview data enabled) causes only those SQL statements

that retrieve data using bind parameters to get aborted while all other static

statements (that use values instead of bind parameters) persist.

To avoid this issue, ensure that you always configure your Snap settings to use bind parameters inside its SQL queries. Default value: Continue to execute queued queries when pipeline is stopped or if it fails. Example: Cancel queued queries when pipeline is stopped or if it fails |

| Snap execution | Dropdown list |

Choose one of the three modes in

which the Snap executes. Available options are:

Default value: Execute only Example: Validate & Execute |

Troubleshooting

| Error | Reason | Resolution |

|---|---|---|

| Missing property value | You have not specified a value for the required field where this message appears. | Ensure that you specify valid values for all required fields. |